Force-enhanced MR Remote Collaboration [IEEE TVCG]

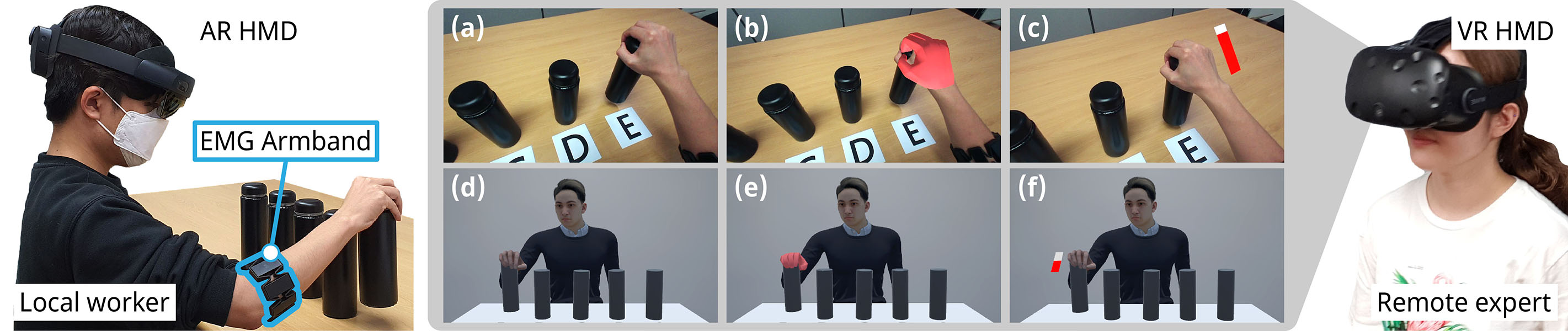

We present a prototype system for sharing a user’s hand force in mixed reality (MR) remote collaboration on physical tasks, where hand force is estimated using a wearable surface electromyography (sEMG) sensor. Hand activity is an essential component of remote collaboration, yet the force exerted by users’ hands has not been extensively investigated. Our sEMG-based system reliably captures the user’s hand force during physical tasks and conveys this information to the remote collaborator through hand force visualization, overlaid on the local user’s view or on the avatar. A user study was conducted to evaluate the impact of sharing hand force on collaboration, employing three distinct visualization methods across two view modes. Our findings demonstrate that sensing and sharing hand force in MR remote collaboration improves the remote expert’s understanding of the worker’s task, significantly enhances the expert’s perception of the collaborator’s hand force and the weight of the interacting object, and promotes a heightened sense of social presence for the remote expert. We also provide design implications for future MR remote collaboration systems that incorporate hand force sensing and visualization.